Pair sources before you lock an answer

Read legends, scales, units, and captions together—decide whether evidence supports a regional trend or a misleading aggregation inside one polygon.

Data Reliability and Bias in AP Human Geography explains how this topic appears across places and scales. Use it to interpret map evidence, compare spatial patterns, and write precise AP-style geographic explanations.

Practice with real AP Human Geography examples, compare spatial evidence across maps, and review with 22 flashcards plus 16 AP-style questions with explanations.


Data reliability means results stay consistent and trustworthy for the question you are answering with a map or table. Bias skews who or what gets counted—missing samples, old vintages, or uneven coverage—so conclusions drift until you read sources, margins of error, and legend units carefully.

College Board expects you to treat geographic evidence like a cautious researcher, not like a headline. Every census tract table, survey banner, smartphone ping, and shaded choropleth arrives with history: who counted, who could not respond, what scale hides, and which cartographic choices steer the eye. Data reliability asks whether those numbers deserve trust for the claim on the table. Data bias asks whether the collection path systematically favors some people or places while muffling others. When you can separate reliability problems from bias problems—and tie both to missing voices—you sound like a geographer on exam day.

This microtopic sits inside AP Human Geography Topic 1.2 (Geographic Data) in Unit 1. You will keep circling back to it while reading about quantitative geographic data, survey data and sampling, census data, or geotagged data. Think of reliability-and-bias literacy as the immune system that keeps those lessons honest.

Practice narrating limitations aloud: first restate the prompt’s conclusion, then poke one hole using source or sample language, then explain how the conclusion shifts once you account for the hole. That cadence mirrors high-scoring FRQ paragraphs and keeps MCQ distractors from tempting you.

Students aiming for a 4 or 5 should memorize the S-S-S-T-M checklist (Source, Sample, Scale, Time, Method), rehearse two vivid bias stories (subway survey + Instagram tourists work well), and finish every critique with an equity consequence—who loses funding, representation, or services when planners trust skewed inputs.

Signs of reliable data: transparent methods, representative coverage, recent vintage.

Common sources of bias: undercounts, non-response, skewed sampling frames.

Data reliability measures how accurate, consistent, complete, and trustworthy geographic evidence is for the question you need to answer. Data bias means the evidence systematically favors, excludes, overrepresents, or underrepresents certain people, places, groups, or outcomes—so maps and statistics mislead even when they look precise.

A recent census count from a national statistical agency is usually more reliable for studying broad population distribution than a hobbyist’s online poll with twenty clicks. A transportation survey conducted only at a downtown subway station is biased because it overrepresents riders who pass that turnstile and underrepresents drivers, cyclists, walkers, suburban commuters, and remote workers—people whose routes never intersect the sampling spot.

This skill ties together everything in Topic 1.2. Whether you work with quantitative data, survey data, census data, or geotagged data, every source carries potential reliability limits. The habit of evaluating evidence separates confident scorers from students who treat digits as neutral truth.

Definition: Data bias happens when data unfairly favors, excludes, overrepresents, or underrepresents certain people, places, groups, or outcomes. Biased data can produce misleading geographic conclusions even when calculations are mathematically correct.

Simple example: A transportation survey taken only at a subway station may overrepresent people who use public transit and underrepresent people who drive, bike, walk, or work from home. Response totals do not rescue the design—the sampling frame skipped entire mobility cultures.

Geographers justify funding, redraw boundaries, and argue about equity using quantitative claims. If the evidence underneath those claims is weak, then recommendations may underserve real neighborhoods. AP Human Geography treats reliability critiques as civic skills, not nitpicks.

For example, a city might rely on spatial data to decide where funding goes or how boundaries are redrawn.

If the data behind those choices is biased or unreliable, some communities may receive fewer seats at the table—literally and politically. Your FRQ paragraphs should echo that stakes-aware tone.

When AP Human Geography asks you to evaluate data, rotate through five prompts bundled as S-S-S-T-M: Source, Sample, Scale, Time, Method. Mentioning one lens with precision beats vaguely calling data “bad.”

| Letter | Question | What to look for |
|---|---|---|
| S: Source | Who collected the data? | Government agency, university lab, smartphone app, volunteered social feed, anonymous forum poll |
| S: Sample | Who or what was included? | Random draw? Stratified coverage? Or convenience sampling beside one landmark? |
| S: Scale | At what geographic scale was data collected and mapped? | National averages hide hyperlocal contrasts; block-level detail may miss regional structure |
| T: Time | When was the data collected? | Fast-changing places break older vintages: migration corridors, disaster zones, boom suburbs |
| M: Method | How was data gathered? | Census enumerator, phone survey, online panel, GPS traces, satellite classification; each pathway introduces distinct skew |

If a stimulus highlights limitations, anchor your sentence to one category: “This exhibits sampling bias because…” performs better than “the survey seems unfair.” Repetition builds fluency—practice aloud until S-S-S-T-M feels automatic.

The honest AP stance: No dataset is flawless. Even flagship censuses admit coverage gaps. Credit arrives when you pinpoint what is missing or distorted and spell out how conclusions soften once limitations surface.

Memorize at least four of these six labels so you can drop precise vocabulary under pressure.

What it is: Sampling bias occurs when the people or places selected for collection do not represent the broader population you intend to describe.

Example: A city asks how residents commute but surveys only riders at a downtown bus stop. Riders appear overrepresented while drivers, cyclists, pedestrians, hybrid commuters, and remote workers fade from view.

Strong AP explanation: “The evidence may be biased because the sample came only from people at a bus stop. That overrepresents public transit users and underrepresents residents who rely on other mobility choices, so citywide modal share estimates inflate bus reliance.”
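The bus-stop problem can be worked through with a little arithmetic. The sketch below is only an illustration: the commuter counts and the chance of passing the bus stop are hypothetical numbers, and the names `city_commuters`, `chance_at_bus_stop`, `mode_share`, and `expected_sample` are invented for this example.

```python
# All numbers below are hypothetical, chosen only to illustrate the mechanism.

# Commuters in an imagined city, grouped by primary mode.
city_commuters = {
    "bus": 20_000,
    "car": 60_000,
    "bike": 8_000,
    "walk": 7_000,
    "remote": 5_000,
}

# Assumed chance that a commuter of each mode passes the downtown
# bus stop while the survey team is standing there.
chance_at_bus_stop = {
    "bus": 0.30,
    "car": 0.01,
    "bike": 0.02,
    "walk": 0.04,
    "remote": 0.00,
}


def mode_share(counts, mode):
    """Fraction of everyone in `counts` who uses `mode`."""
    return counts[mode] / sum(counts.values())


def expected_sample(population, inclusion_chance):
    """Expected number of people per mode who end up in the survey."""
    return {m: population[m] * inclusion_chance[m] for m in population}


true_bus_share = mode_share(city_commuters, "bus")
bus_stop_sample = expected_sample(city_commuters, chance_at_bus_stop)
sampled_bus_share = mode_share(bus_stop_sample, "bus")

print(f"Citywide bus share:    {true_bus_share:.0%}")
print(f"Bus-stop survey share: {sampled_bus_share:.0%}")
```

Under these made-up inputs the true bus share is about 20 percent, while the bus-stop sample reports roughly 85 percent. Collecting more responses at the same spot would not close that gap, because the distortion comes from who can enter the sample, not from how many people answer.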

For more on sampling frames, visit survey data and sampling.

What it is: Technology bias appears when data pathways require devices, bandwidth, accounts, or literacy that segments of the population lack.

Examples: Smartphone traces skip individuals without phones; social feeds overweight chronically online groups; app delivery logs miss cash-heavy informal markets.

AP-style paragraph: “Instagram-based tourism metrics spotlight younger visitors who geotag nightlife districts. Older travelers who spend quietly offline barely register, so tourism planners might misunderstand spending geography.” Pair this critique with the dedicated geotagged data guide.

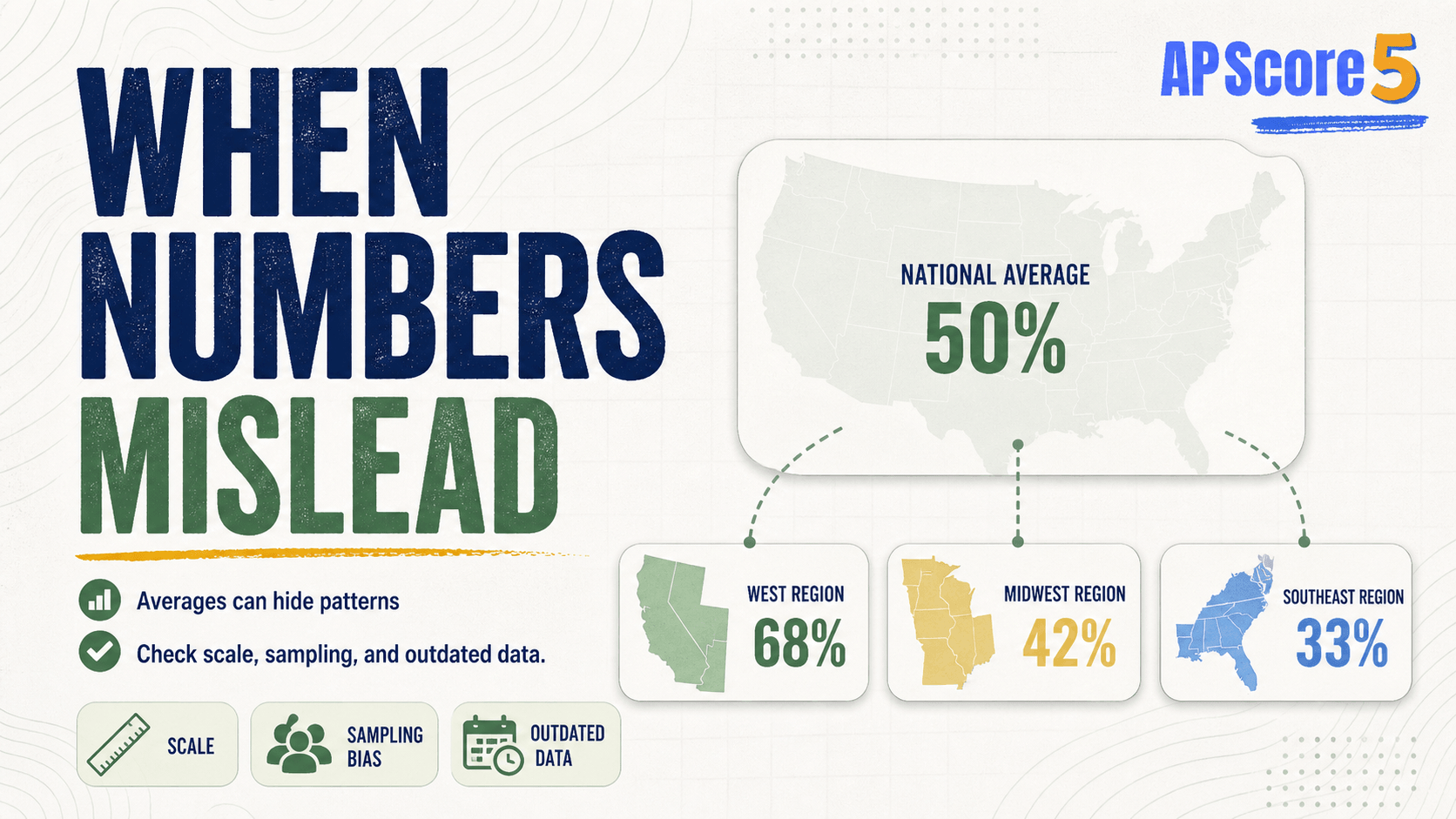

What it is: Patterns shift when you aggregate across geographic scales. National averages can cloak municipal poverty belts or prosperous micropolitan hubs.

Example: A country may report high mean income while interior regions remain distressed.

Exam-ready sentence: “National-scale summaries hide regional inequality because averaging smooths contrasts between urban cores and peripheral towns.”
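A short calculation shows how an average can smooth away regional contrast. This is a sketch under stated assumptions: the three regions, their populations, and their incomes are hypothetical, and `national_mean` and `ratio_to_national` are helper names made up for the illustration.

```python
# Hypothetical regions: name -> (population, mean household income).
regions = {
    "Coastal metros": (6_000_000, 90_000),
    "Secondary cities": (3_000_000, 60_000),
    "Interior towns": (1_000_000, 30_000),
}


def national_mean(regions):
    """Population-weighted mean income across all regions."""
    total_pop = sum(pop for pop, _ in regions.values())
    total_income = sum(pop * income for pop, income in regions.values())
    return total_income / total_pop


def ratio_to_national(regions):
    """Each region's mean income as a fraction of the national mean."""
    nat = national_mean(regions)
    return {name: income / nat for name, (_, income) in regions.items()}


print(f"National mean income: {national_mean(regions):,.0f}")
for name, ratio in ratio_to_national(regions).items():
    print(f"{name}: {ratio:.0%} of the national mean")
```

With these invented figures the national mean is 75,000, yet the interior towns sit at only 40 percent of it. A single national number would report a comfortable country while one region remains distressed, which is the pattern the exam-ready sentence describes.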

What it is: Evidence collected before housing booms, refugee arrivals, factory closures, or wildfire seasons may describe an obsolete geography.

Contexts where freshness matters: Fast-growing metros, migration corridors, gentrifying blocks, disaster footprints, epidemiological waves, land-cover conversions.

Example: Using 2010 childhood counts to allocate 2026 classroom seats in exurbs risks underestimating the young families who have since been priced out of the urban core and into peripheral towns.

What it is: Nonresponse bias arises because the people who opt in often differ systematically from those who refuse or stay silent.

Examples: Voluntary web polls attract motivated users; robocalls miss renters who avoid unknown numbers; kiosk surveys skip night-shift workers racing to daycare pickups.

AP framing: “Nonresponse bias may emerge because renters without broadband submit fewer online forms, so homeowner perspectives overweight results.”
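The renter and homeowner framing can be turned into a quick worked example. Everything here is an assumption made for illustration: the group sizes, support levels, and response rates are hypothetical, and the sketch assumes that within each group, responders and non-responders hold the same views.

```python
# Hypothetical groups: size, true support for a new bus route, and the
# share of each group that actually submits the online form.
groups = {
    "renters": {"size": 10_000, "support": 0.70, "response_rate": 0.10},
    "homeowners": {"size": 10_000, "support": 0.30, "response_rate": 0.40},
}


def true_support(groups):
    """Support across the whole population, responders or not."""
    supporters = sum(g["size"] * g["support"] for g in groups.values())
    return supporters / sum(g["size"] for g in groups.values())


def observed_support(groups):
    """Support among only the people who returned the survey."""
    respondents = sum(g["size"] * g["response_rate"] for g in groups.values())
    supporters = sum(
        g["size"] * g["response_rate"] * g["support"] for g in groups.values()
    )
    return supporters / respondents


print(f"True support:     {true_support(groups):.0%}")
print(f"Observed support: {observed_support(groups):.0%}")
```

In this invented case half the city supports the route, but the survey reports about 38 percent because homeowners answer four times as often as renters. The survey math is correct; the tilt comes entirely from who responded.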

What it is: Maps communicate authority, yet every symbology decision encodes editorial judgment.

Mapmakers choose: Included variables, omitted territories, color ramps, classification breaks, projection distortions, scale bars that exaggerate density, icon emphasis.

Example: A choropleth map may rely on dramatic hue contrasts so modest statistical differences resemble crises.
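Classification breaks are one concrete way a choropleth can dramatize small gaps. The sketch below uses hypothetical unemployment rates for eight districts and two simple classing helpers, `quantile_classes` and `fixed_classes`, written for this illustration only (they are not taken from any mapping library). The quantile helper assumes all values are distinct.

```python
import bisect

# Hypothetical unemployment rates (percent) for eight districts.
rates = [4.8, 4.9, 5.0, 5.1, 5.2, 5.3, 5.4, 5.5]


def quantile_classes(values, n_classes):
    """Rank the values and split the ranks into equal-count classes."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    classes = [0] * len(values)
    for rank, idx in enumerate(order):
        classes[idx] = rank * n_classes // len(values)
    return classes


def fixed_classes(values, lower_bounds):
    """Assign each value to the class whose lower bound it meets or exceeds."""
    return [bisect.bisect_right(lower_bounds, v) - 1 for v in values]


# Four quantile classes: every shade from lightest to darkest gets used.
print(quantile_classes(rates, 4))

# Fixed classes starting at 0, 4, 8, and 12 percent: one shade for all districts.
print(fixed_classes(rates, [0, 4, 8, 12]))
```

The same eight districts, separated by less than one percentage point, span all four color classes under quantile breaks but share a single class under the fixed breaks. Neither map is false, which is why checking the legend's class boundaries matters before reading a crisis into dark shading.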

| Data type | Common reliability issues |
|---|---|
| Census data | Undercounts among homeless residents, skeptical households, remote settlements; categories evolve slowly; intercensal drift between official releases |
| Survey data | Sampling bias, nonresponse bias, technology bias, ambiguous wording, translation gaps |
| Geotagged data | Smartphone divide, self-selection among posters, tourists dominating nightlife pings, uneven GPS accuracy indoors |
| Quantitative aggregates | Outdated denominators, coarse bins masking inequality, ecological fallacies when inferring individuals from zones |
| Maps | Color psychology, scale compression, projection bias, cherry-picked baselines |
| Field observations | Observer bias, weather-limited site visits, snapshot timing |
| Remote sensing | Cloud cover gaps, mixed-pixel uncertainty in coarse imagery, calibration drift across sensors |

Deep-link into specialized guides when you need worked examples: quantitative geographic data, survey data and sampling, census data, geotagged data, or remote sensing imagery reliability trade-offs.

Yes, even census data can be biased: official counts remain the gold standard for nationwide totals yet still embed structural coverage gaps.

AP-ready illustration: If wary households skip census forms, neighborhood population may land low on paper. Political representation and formula-funded programs keyed to headcounts could shortchange families who were present but invisible to the tally.
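The funding consequence of an undercount can be sketched numerically. The two neighborhoods, the 10 percent undercount, the funding pool, and the `allocate` helper are all hypothetical; real formula-funded programs use more complex rules.

```python
def allocate(pool, counts):
    """Split a funding pool in proportion to each area's counted population."""
    total = sum(counts.values())
    return {name: pool * n / total for name, n in counts.items()}


# Hypothetical: both neighborhoods truly hold 10,000 residents,
# but wary households in Neighborhood B skip the census form.
true_population = {"Neighborhood A": 10_000, "Neighborhood B": 10_000}
census_count = {"Neighborhood A": 10_000, "Neighborhood B": 9_000}

pool = 1_900_000  # hypothetical funding pool, in dollars
funding = allocate(pool, census_count)

for name in true_population:
    per_resident = funding[name] / true_population[name]
    print(f"{name}: ${funding[name]:,.0f} total, ${per_resident:.2f} per actual resident")
```

Under these assumptions Neighborhood A receives 100 dollars per actual resident and Neighborhood B only 90, even though the two populations are identical. The residents who went uncounted are still present and still need services, which is the equity consequence an FRQ answer should name.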

The strongest responses acknowledge census strengths while naming specific gap mechanisms rather than declaring the census meaningless.

Geotagged layers feel futuristic, yet they inherit every smartphone-era divide.

Application sentence: “A heat map built from restaurant check-ins displays where influencers dine—not necessarily where cash-pay workers eat after late shifts.” Saying whom the layer represents prevents overgeneralization.

Use this skeleton until muscle memory takes over:

Data source → Bias or limitation → Who or what is missing → Impact on conclusion

Annotated example: “Geotagged social posts only capture users who publish publicly and enable location sharing. People without smartphones—or those avoiding surveillance—vanish from the layer, so planners estimating downtown foot traffic may exaggerate smartphone-heavy demographics.”

This paragraph wins points because it identifies mechanism, affected populations, and downstream distortion.

Spot flawed sampling, outdated census years, or misleading aggregation on MCQs.

Critique a dataset before accepting map patterns; propose what is missing.

Tables with margins of error, maps with footnotes, paired qualitative quotes.

Strong AP answer structure: Source → Method → Coverage gap → How bias skews the map → Fix / caution.

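Data is biased when it unfairly favors, excludes, overrepresents, or underrepresents certain people, places, groups, or outcomes.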


Prompt: A city government uses an online survey to decide where to add new bus routes. Most responses come from residents in wealthy neighborhoods with high internet access.

A. Data bias occurs when data overrepresents or underrepresents certain people, places, or groups, leading to misleading conclusions.

B. The survey may have technology bias because it was conducted online. Residents without reliable internet access may be less likely to respond, so lower-income neighborhoods may be underrepresented.

C. The city may add bus routes in wealthy neighborhoods where more people responded, even if lower-income neighborhoods have a greater need for public transportation. This could lead to unequal access to services.

D. The city could improve reliability by using a representative sample across all neighborhoods and offering the survey in multiple formats, such as paper, phone, online, and in-person collection.

Part A: Credit mentions of overrepresentation or underrepresentation tied to misleading conclusions.

Part B: Credit naming the bias type plus identifying underrepresented residents.

Part C: Credit transportation consequences such as unequal service or misallocated routes.

Part D: Credit concrete fixes—probability sampling, multilingual outreach, offline modes.

Common mistakes: stopping after “online surveys are biased,” forgetting equity impacts, or proposing vague “better data” without tactics.

Data reliability means how trustworthy, accurate, complete, and consistent geographic data is. Reliable data can be used as evidence to support geographic conclusions.

Data bias happens when data does not fairly represent the people, places, or patterns being studied. It may leave out certain groups or overrepresent others.

Surveying only people at a train station to estimate how an entire city commutes overrepresents public transit users and leaves out drivers, cyclists, pedestrians, and remote workers.

Geographic data can be unreliable if it is outdated, incomplete, collected from a biased sample, gathered using weak methods, or analyzed at the wrong scale.

Scale matters because data at one scale can hide patterns at another scale. A national average may hide regional, city-level, or neighborhood-level differences.

Yes. Quantitative data can be biased if the numbers come from a flawed sample, outdated source, incomplete count, or misleading categories.

Yes. Census data can undercount homeless populations, undocumented migrants, remote communities, or people who don't respond.

Maps can be biased through choices about color, scale, projection, symbols, categories, class breaks, and what data is included or excluded. A choropleth map using dark colors can make small differences look dramatic.

Explain who or what is missing, why the data is not representative, and how that could affect the conclusion. Use the structure: source → bias → who's missing → impact.

Nonresponse bias matters because the people who respond to a survey often differ from those who don't. If only motivated, available, or tech-equipped people respond, the data tilts toward their views.

S-S-S-T-M: Source, Sample, Scale, Time, Method. If a question asks about reliability or limitations, name one of these five and explain the impact.

Treat this microtopic as living vocabulary—reuse these habits whenever stimuli combine maps, tables, interviews, or timelines.

Population change, cultural diffusion, borders, rural systems, urban service gaps, and economic indicators all reward the spatial precision you practice in Unit 1.

Name the place, pull a detail from the stimulus, connect to a course concept, and end with a consequences sentence—skip definition dumps.

Call out who collected the data, at what geography, and when. Note missing groups when quantitative and qualitative pieces disagree.