Pair sources before you lock an answer

Read legends, scales, units, and captions together—decide whether evidence supports a regional trend or a misleading aggregation inside one polygon.

Survey Data and Sampling in AP Human Geography explains how this topic appears across places and scales. Use it to interpret map evidence, compare spatial patterns, and write precise AP-style geographic explanations.

Practice with real AP Human Geography examples, compare spatial evidence across maps, and review with 22 flashcards plus 16 AP-style questions with explanations.


Survey sampling decides who answers a questionnaire: probability samples give every member of the population a known chance of selection, while convenience samples overweight respondents who are easy to reach. Reporting bias, spatial gaps in coverage, and question wording shape whether mapped results generalize, which AP exam items probe through sampling vocabulary.

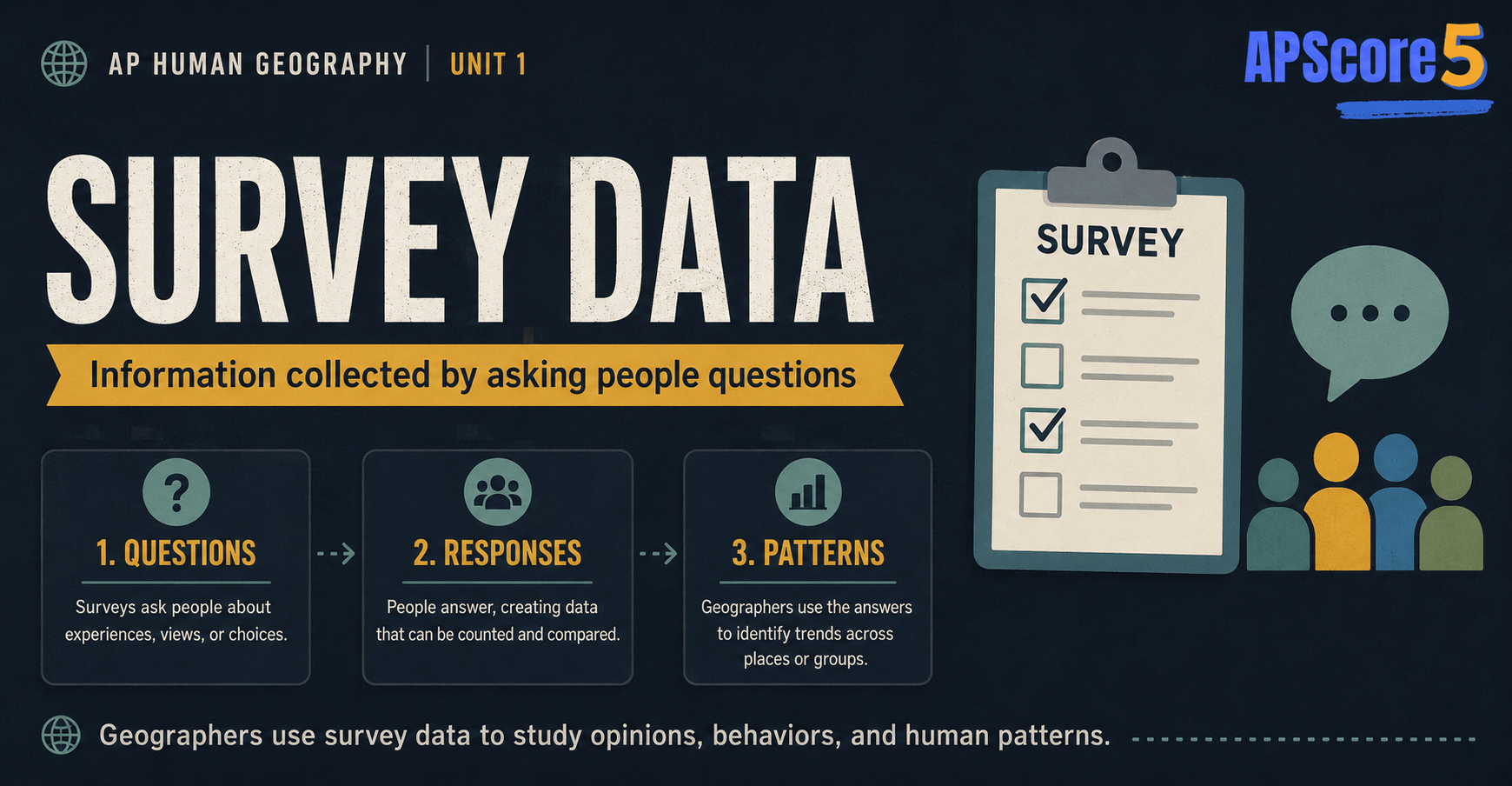

Definition: Survey data is information collected from people by asking questions. Geographers use survey data to study opinions, behaviors, movement patterns, needs, perceptions, and experiences across places.

Survey data can be collected through online forms, phone interviews, paper questionnaires, in-person interviews, classroom surveys, community forums, door-to-door research, or app-based questionnaires. Each mode reaches different people. A paper survey mailed with utility bills might reach seniors who ignore Instagram polls; a student-led hallway survey might capture adolescent attitudes but tell you little about parents who work night shifts.

Simple example: A geographer asks 500 residents how they commute to work. Their answers—minutes on the road, mode choice, satisfaction ratings—are survey data whether recorded on a tablet or scribbled on clipboards.

Pair that commute survey with mapped transit lines and you suddenly see whether frustration clusters far from rail corridors—a synthesis move graders reward when stimulus maps appear beside quotation snippets.

| Method | Strength or risk |
|---|---|
| Random | Lower bias if response rates hold |
| Stratified | Ensures subgroups are represented |
| Convenience | Often skewed toward accessible places |
| Snowball | Reaches hard-to-reach networks; not generalizable alone |

Survey data is information collected by asking people questions. In AP Human Geography, surveys help geographers understand human behavior, opinions, movement, culture, preferences, and access to services. They turn invisible attitudes—fear of crime near a new bike lane, excitement about a festival, frustration with a bus schedule—into evidence you can map, compare, and write about on an FRQ.

But survey data is only useful if the sample is strong. A poorly chosen sample can lead to misleading conclusions. That is why sampling—the process of picking who to ask—matters as much as the survey itself. Examiners love items that pair a friendly scenario with a hidden bias. Your job is to name the gap between who answered and who the policy affects.

Surveys are how geographers get inside people’s heads: opinions, perceptions, reasons for migrating, and sense of place, none of which appear on a satellite image or in a census table. But every survey involves choices, and those choices can introduce bias. Mastering this topic is one of the highest-impact study moves you can make for Unit 1 FRQs: you get vocabulary, a repeatable writing frame, and a way to critique almost any map or policy chart that mentions “community input.”

When you read a newspaper story that says “62% of residents support the new mixed-use project,” your geography brain should immediately ask: Which residents? How were they contacted? Who refused to answer? Those questions are not cynicism—they are the skill.

Metropolitan planning departments, university labs, and advocacy nonprofits all publish survey-backed maps: commute satisfaction by census tract, perceived safety near transit stops, or grocery shopping radius by income band. Learn to read those figures as layers tied to sampling choices, not as neutral truths floating above place.

Human geography studies people and places. Many geographic questions require understanding what people do, think, prefer, or experience. Surveys help collect that information directly from people rather than inferring it only from buildings or counts.

Geographers use survey data to study questions like how residents commute to work, how far they travel for groceries, and why they moved to a city.

Survey data is especially useful when geographers need information that is not visible on a map or in a table of quantitative geographic data. Pair closed-ended counts with open-ended explanations and you can narrate both what is happening and why it matters politically or culturally.

Urban planners, transit agencies, school districts, and nonprofits routinely combine spatial layers with survey waves. A heat map of grocery stores shows supply; a household survey shows whether residents can afford time and money to reach those stores. Without both, food-access policy drifts toward guesses.

Think about environmental justice debates: residents may report odors or asthma flare-ups that never appear in aggregate emissions databases. Surveys do not replace sensors, but they surface lived experience that shapes political pressure and citation-worthy qualitative evidence.

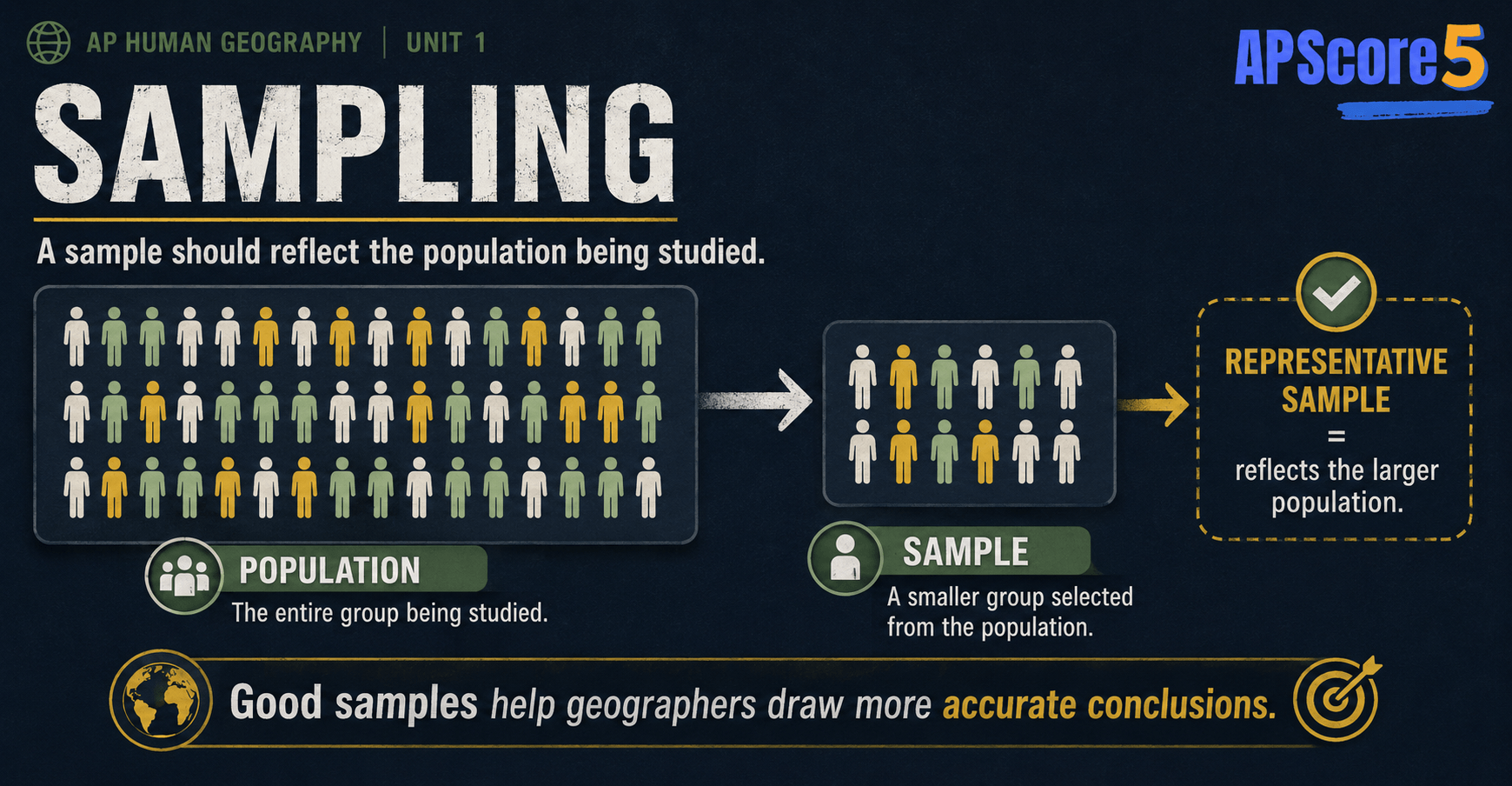

Sampling is the process of collecting data from a smaller group of people to represent a larger population. A geographer usually cannot ask every person in a city, country, school district, or region. Instead, the geographer surveys a sample.

Example: A city has 500,000 residents. A geographer surveys 1,000 residents about public transportation. Those 1,000 people are the sample. Whether they fairly represent all 500,000 depends on how they were chosen—not automatically on the fact that 1,000 sounds big.

On exams, always connect sampling language to geographic scale. A sample drawn from one ZIP code is fine if the research question is about that ZIP code; it fails if someone claims the results describe the entire metropolitan area.

Spatial sampling can also mean selecting places—random census tracts, stratified neighborhoods, or transects along a corridor—before surveying people inside those units. Mentioning geographic stratification signals AP-level thinking beyond “we asked some folks.”
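The place-then-people idea can be sketched as a two-stage draw. This is only an illustration under stated assumptions: the six tracts, the household IDs, and the function name `two_stage_sample` are all hypothetical, and real studies usually add stratification or weighting on top.

```python
import random

def two_stage_sample(households_by_tract, n_tracts, n_per_tract, seed=0):
    """Stage 1: pick tracts at random. Stage 2: pick households at random inside each."""
    rng = random.Random(seed)  # fixed seed so the draw can be repeated
    chosen_tracts = rng.sample(sorted(households_by_tract), n_tracts)
    sample = {}
    for tract in chosen_tracts:
        households = households_by_tract[tract]
        k = min(n_per_tract, len(households))  # never ask for more than exist
        sample[tract] = rng.sample(households, k)
    return sample

# Hypothetical city: 6 census tracts with 40 household IDs each
households_by_tract = {
    f"tract_{t}": [f"t{t}_h{h}" for h in range(40)] for t in range(1, 7)
}

picked = two_stage_sample(households_by_tract, n_tracts=3, n_per_tract=5)
for tract, households in picked.items():
    print(tract, households)
```

Only households inside the selected tracts can appear, so any claim from this sample should be scaled to the tracts that were eligible for selection.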

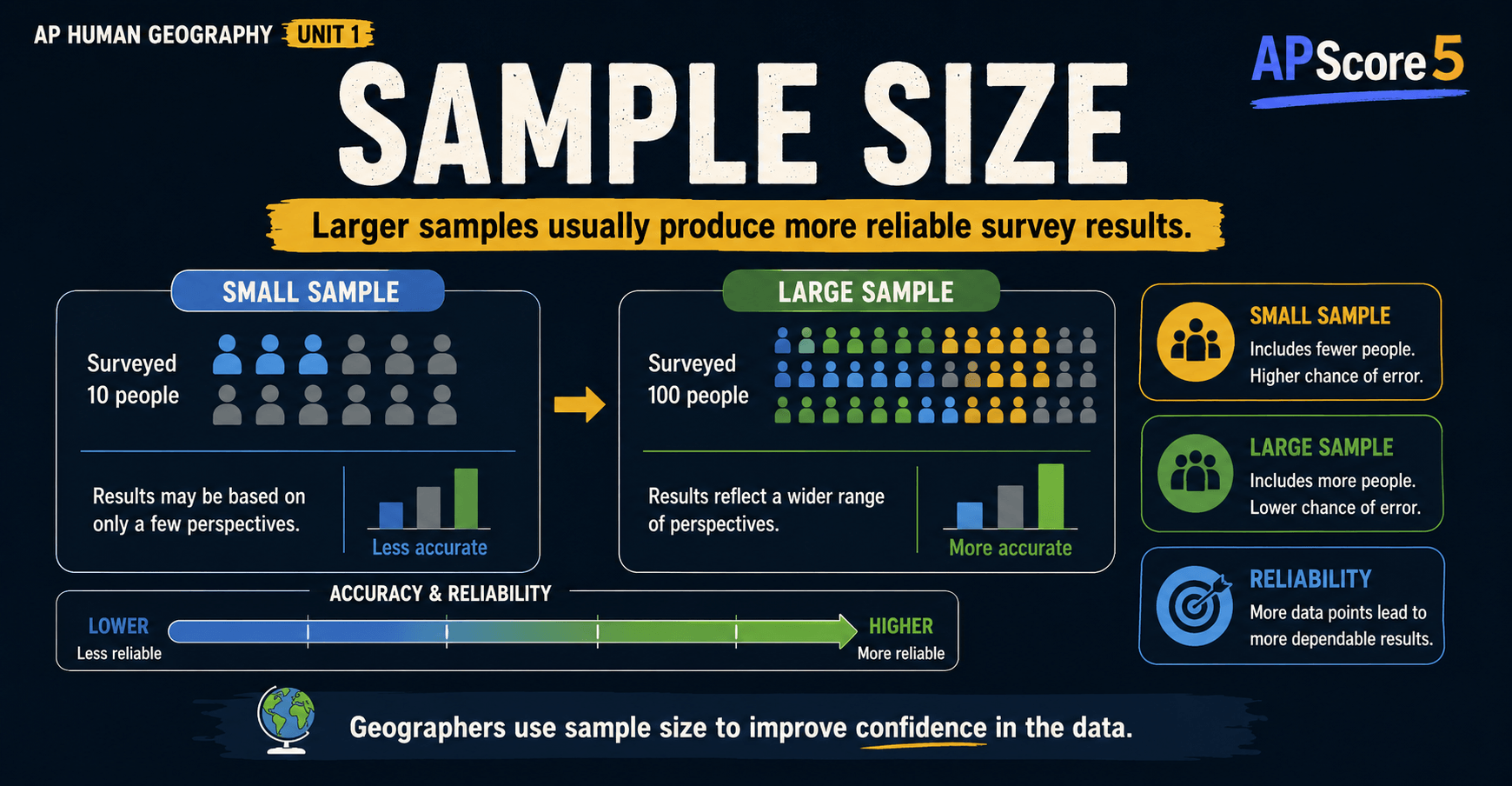

Sample size is the number of people included in a survey. In general, a larger and more representative sample gives more reliable results. A tiny sample can be misleading because it may not reflect the larger population’s diversity of income, age, language, or neighborhood context.

Important AP idea: A large sample is not automatically good. The sample also needs to represent the full population.

For example, surveying 5,000 people from one wealthy suburb does not represent an entire metropolitan area. The number is large, but the sample is not representative—and that is the failure that matters on rubrics.

Think of sample size as the source of statistical stability, and representativeness as the guarantee that the sample includes each major slice of geography you care about: inner core, first-ring suburbs, rural fringes, renter-heavy blocks, and so on.

Intro statistics classes teach confidence intervals; AP Human Geography usually stops at conceptual clarity—yet dropping a sentence like “results might swing wildly with only twelve surveys” can earn sophistication points when prompts highlight a minuscule n.
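To see why a tiny n is unstable, here is a rough sketch using the textbook margin-of-error approximation for a proportion. It goes beyond what AP Human Geography requires and assumes a simple random sample, a 95% confidence level (z = 1.96), and the worst-case proportion p = 0.5; the approximation is itself shaky at very small n, so treat the twelve-survey figure as an order of magnitude.

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Approximate 95% margin of error for a proportion from a simple random sample."""
    return z * math.sqrt(p * (1 - p) / n)

for n in (12, 100, 1000):
    moe = margin_of_error(n) * 100
    print(f"n={n:>4}: about +/- {moe:.1f} percentage points")
```

With twelve surveys the uncertainty is roughly 28 percentage points either way, while 1,000 surveys bring it down to about 3. None of this helps if the sample is unrepresentative, because the formula measures random error only, not bias.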

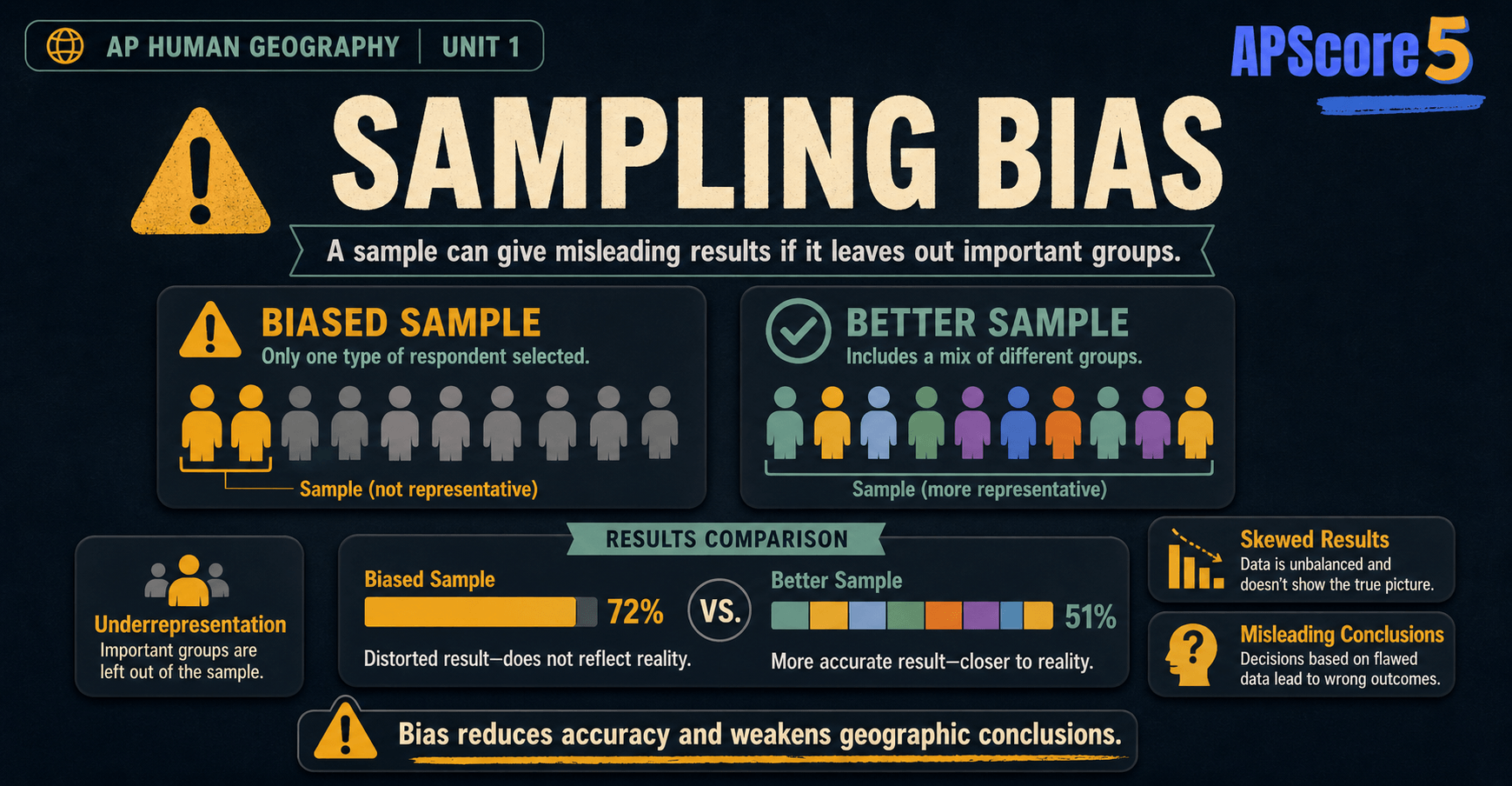

Sampling bias happens when the people surveyed do not accurately represent the larger population being studied. If a sample leaves out important groups, the results can be misleading even when charts look polished.

Example: A city wants to know how residents travel to work. Researchers survey only people at a downtown train station.

That survey may overrepresent people who use trains and underrepresent people who drive, bike, walk, work from home, or live far from transit. The results could imply “most residents use the train” only because the sample never included anyone who did not pass through that station during fieldwork hours.
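The distortion can be made concrete with a small arithmetic sketch. Every number below is an invented assumption: a city of 10,000 commuters and a guess at what share of each group walks past the downtown station during fieldwork hours.

```python
# Hypothetical city of 10,000 commuters; every number here is an illustrative assumption
commuters = {
    "drive": 6500,
    "train": 1500,
    "bus": 800,
    "walk_or_bike": 700,
    "work_from_home": 500,
}

# Assumed share of each group that passes the downtown station during fieldwork
reach_at_station = {
    "drive": 0.01,
    "train": 0.60,
    "bus": 0.10,
    "walk_or_bike": 0.05,
    "work_from_home": 0.0,
}

def mode_share(counts, mode):
    """Share of all counted people who use the given mode."""
    return counts[mode] / sum(counts.values())

# Expected number of each group that fieldworkers would intercept at the station
intercepted = {mode: commuters[mode] * reach_at_station[mode] for mode in commuters}

print(f"True train share:     {mode_share(commuters, 'train'):.0%}")
print(f"Station-survey share: {mode_share(intercepted, 'train'):.0%}")
```

Under these assumptions train riders are 15% of commuters but about 83% of the people intercepted, which is the "most residents use the train" error in numeric form.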

For a fuller treatment of how sampling bias fits into the larger family of data problems, see data reliability and bias in AP Human Geography.

Time-of-day bias deserves its own sentence: intercept surveys at lunch hour miss night-shift workers; weekend park intercepts miss Monday-through-Friday office commuters. Spell out the temporal blind spot and you sound like a working researcher, not a textbook parrot.

Random sampling is a method where every person or location in the population has an equal chance of being selected (with adjustments for complex designs in advanced research). Random sampling helps reduce bias because the researcher is not choosing only convenient or preferred respondents.

A geographer studying food access randomly selects households from different neighborhoods and asks residents how far they travel to buy groceries.

This design is stronger than only surveying shoppers at one grocery store, because the sample is not tied to a specific kind of trip or neighborhood. Store-intercept surveys still appear in real life—they are cheap—but AP prompts reward students who spot that they overweight people who already reached food sources.

Random sampling makes survey results more reliable because it reduces the chance that only one type of person or place is represented. It is the standard answer when an item asks how to reduce sampling bias, especially compared with convenience sampling at malls, stadium gates, or viral links.

Remember limitations too: random sampling needs an accurate list of the population (the sampling frame). If housing records omit informal dwellings, “random” picks still miss whole communities.
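A minimal sketch of simple random sampling and its dependence on the sampling frame, assuming a made-up list of 5,000 recorded households plus 300 informal dwellings that the records omit. The names `frame`, `unlisted`, and `simple_random_sample` are illustrative only.

```python
import random

def simple_random_sample(frame, n, seed=42):
    """Draw n units without replacement; every unit in the frame is equally likely."""
    rng = random.Random(seed)  # fixed seed so the draw can be repeated
    return rng.sample(frame, n)

# Hypothetical sampling frame: households that appear in official housing records
frame = [f"household_{i:04d}" for i in range(1, 5001)]

# Hypothetical informal dwellings that the records omit
unlisted = [f"informal_{i:03d}" for i in range(1, 301)]

sample = simple_random_sample(frame, n=100)
print(len(sample), "households drawn")
print("Unlisted households in sample:", sum(h in unlisted for h in sample))
```

The draw is fair for everyone on the list, yet the count of unlisted households is always zero: a random method cannot reach people the frame never recorded.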

Stratified random sampling—random draws inside each major neighborhood type—shows up in higher-level work; if a stimulus mentions stratification, explain that it protects representation across urban zones before randomizing within each stratum.
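Stratified random sampling can be sketched the same way. The example assumes four invented neighborhood types with made-up household counts and uses proportional allocation, which is one common design choice among several; the function name `stratified_sample` is hypothetical.

```python
import random

def stratified_sample(strata, total_n, seed=7):
    """Proportional allocation: each stratum's share of the sample matches its population share."""
    rng = random.Random(seed)
    population = sum(len(units) for units in strata.values())
    sample = {}
    for name, units in strata.items():
        # Rounding can make the total differ slightly from total_n in general
        k = round(total_n * len(units) / population)
        sample[name] = rng.sample(units, k)  # random draw inside the stratum
    return sample

# Hypothetical household counts by neighborhood type
sizes = {"urban_core": 2000, "inner_suburb": 5000, "outer_suburb": 2500, "rural_fringe": 500}
strata = {name: [f"{name}_{i}" for i in range(n)] for name, n in sizes.items()}

sample = stratified_sample(strata, total_n=200)
for name, units in sample.items():
    print(f"{name}: {len(units)} households")
```

Here the rural fringe is guaranteed 10 of the 200 slots. A simple random draw of 200 would give it 10 on average but could easily return far fewer.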

Survey data can be quantitative, qualitative, or both.

Simple rule: If the survey answer is a number, percentage, or category that can be counted, it is quantitative. If the answer is a description, explanation, or personal experience, it is qualitative.

| Quantitative survey answer | Qualitative survey answer |
|---|---|
| “I commute 35 minutes.” | “My commute is exhausting because of construction.” |
| “We have 4 people in the household.” | “My household feels crowded since my parents moved in.” |
| “Income range: $45,000 to $60,000.” | “We’ve felt more financial pressure since rent went up.” |

A well-designed geographic survey often collects both. The numbers reveal patterns; the descriptions reveal why the patterns exist. Mixed-methods studies might map quantitative averages by census tract while pulling quotations from open-ended items to humanize the statistics for policymakers.

Likert-scale questions (“rate agreement 1–5”) land in a gray zone: treat them as quantitative summaries but recognize they compress nuanced feelings into ordinal buckets—mention that limitation when critiquing methodology.

A geographer wants to study whether residents in a city have equal access to grocery stores. The geographer surveys 1,200 residents across the city.

The sample includes residents from different neighborhood types—high-income suburbs, low-income inner-city areas, mixed-density neighborhoods, and outer suburbs. This makes it more representative than surveying only people near one grocery store.

Survey data could reveal that low-income residents travel farther for groceries and have less access to fresh produce. This may suggest a food desert or unequal access to services. Combined with GIS-mapped store locations, the geographer can identify neighborhoods that lack fresh-food retailers within a short distance.

Because the sample includes residents from different neighborhood types, it does not accidentally exclude the people most affected by the issue. The sample mirrors more of the city’s diversity, though researchers should still watch for language barriers, digital divides on online instruments, and households without stable addresses.

Commute studies, park-use surveys, and food-access audits show up constantly in AP stimuli. Practice narrating how survey layers combine with cartographic layers so you can earn both knowledge and synthesis points.

City governments sometimes pair food-access surveys with participant-drawn mental maps; even if the prompt does not show sketches, mentioning sketch-map exercises proves you know participatory GIS complements standardized questionnaires.

When you evaluate survey reliability on an FRQ, check the scenario against the criteria covered above: sample size, representativeness, selection method, and survey mode. Mention at least two concrete flaws (location bias plus technology bias, for instance) rather than stopping at “bad sample.”

Weighting and post-stratification show up in professional reports; you rarely need formulas, but noting that agencies sometimes adjust results to match census demographics signals mature reasoning.
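For the curious, the adjustment works roughly as below. This is a simplified sketch with invented numbers: a 1,000-person online survey that over-samples younger adults, hypothetical census shares by age group, and made-up satisfaction rates. Agencies use more elaborate procedures.

```python
# All figures are hypothetical and chosen only to illustrate the mechanics
sample_counts = {"18_34": 500, "35_64": 400, "65_plus": 100}      # who answered
census_share = {"18_34": 0.30, "35_64": 0.50, "65_plus": 0.20}    # who lives there
satisfied_rate = {"18_34": 0.70, "35_64": 0.50, "65_plus": 0.30}  # share satisfied, by group

total = sum(sample_counts.values())

# Weight = population share / sample share, so underrepresented groups count for more
weights = {g: census_share[g] / (sample_counts[g] / total) for g in sample_counts}

unweighted = sum(sample_counts[g] * satisfied_rate[g] for g in sample_counts) / total
weighted = (
    sum(sample_counts[g] * weights[g] * satisfied_rate[g] for g in sample_counts)
    / sum(sample_counts[g] * weights[g] for g in sample_counts)
)

print("Weights:", {g: round(w, 2) for g, w in weights.items()})
print(f"Unweighted satisfaction: {unweighted:.0%}")
print(f"Weighted satisfaction:   {weighted:.0%}")
```

In this example the headline figure drops from 58% to 52% once older residents count in proportion to their census share. Weighting can only rebalance groups that answered in some number; it cannot recover a group the survey missed entirely.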

Naming the bias is step one; linking it to who disappears from the dataset is step two. That pairing is what separates partial credit from full credit.

Umbrella terms such as “response bias” sometimes confuse students. Focus on the mechanism, meaning the process that excluded or warped answers, rather than dumping vague vocabulary.

When explaining sampling bias, always identify where the bias comes from, who is missing from the sample, and how that gap distorts the results.

Weak answer: “The survey is biased.”

Strong answer: “The survey is biased because it only included people at a downtown train station. This leaves out residents who drive, walk, bike, work from home, or live far from rail lines, so the results may overestimate public transit use.”

That is the kind of answer AP graders want. The pattern is identify → explain who is missing → predict the distortion.

Practice translating each step of that pattern into geographic language: instead of “drivers missing,” say “people without rail-adjacent jobs along peripheral suburban corridors,” tying the bias critique back to spatial structure.

| Feature | Survey Data | Census Data |
|---|---|---|
| Goal | Study a sample of the population | Count or describe the whole population |
| Source | Researchers, governments, businesses, NGOs | Usually a national government |
| Frequency | Variable (one-time, periodic, ongoing) | Usually every 10 years |
| Sample size | Hundreds to thousands | Entire population |
| Strengths | Captures opinions, behaviors, perceptions | Official, broad, demographic |
| Weaknesses | Sampling bias, nonresponse, technology bias | Undercounting, outdated between cycles |

For the full picture on the other side of this comparison, see census data in AP Human Geography.

American Community Survey (ACS) estimates blend census infrastructure with sampling. If a stimulus mentions ACS, note that its estimates carry margins of error because they are survey-based, whereas the decennial census aims to enumerate everyone.

Another trap: treating every online poll as equally worthless. Point to specific coverage gaps instead of sneering at the internet—sometimes hybrid designs nail representation.

Define sampling types, detect bias from selection, connect surveys to mapped results.

Explain how a sampling frame shapes mapped findings or policy claims.

Survey excerpts with margins of error; urban-only interview panels.

Strong AP answer structure: Question → Sample design → Bias risk → How maps could mislead.



Prompt: A city wants to understand why some neighborhoods have lower access to public parks. Researchers survey 1,000 residents about park use, travel distance, safety concerns, and transportation access. Most survey responses come from residents who completed an online form.

A. Survey data is information collected by asking people questions about their behaviors, opinions, experiences, or needs.

B. Survey data could show how far residents travel to reach parks, whether they feel safe using parks, and whether transportation limits their access. This helps identify which neighborhoods face barriers to park use.

C. The survey may have technology bias because most responses came from an online form. Residents without reliable internet access, older residents, or lower-income residents may be underrepresented.

D. Even though 1,000 responses may seem large, the results can still be biased if the sample does not represent the whole city. If responses mostly come from internet users or certain neighborhoods, the survey may not accurately reflect residents with the greatest park access problems.

Part A (1 pt): Must mention “asking people questions” and the kinds of information collected.

Part B (1 pt): Must connect survey data to specific decisions about park access.

Part C (1 pt): Must name a specific bias source AND explain who is underrepresented.

Part D (1 pt): Must explain that representativeness, not size alone, drives reliability.

Avoid stopping at “online is biased” without naming the skipped groups, or praising the n=1,000 without questioning geography.

Survey data is information collected by asking people questions. Geographers use it to study opinions, behaviors, experiences, needs, and movement patterns across places.

One example is asking residents how they commute to work, how far they travel for groceries, or why they moved to a city. The answers, both numerical and descriptive, are survey data.

Sampling is collecting data from a smaller group of people or places to represent a larger population. Geographers use it because they cannot ask every person in a city or country.

Sample size is the number of people or locations included in a survey. Larger sample sizes can improve reliability, but only if the sample represents the population.

Sampling bias happens when a survey includes people or places that do not represent the larger population. For example, surveying only train riders will overestimate public transit use.

Sample size matters because very small samples may produce unreliable results. However, a large sample can still be biased if it leaves out important groups.

Random sampling is a method where every person or location has an equal chance of being selected. It helps reduce sampling bias.

Yes. Survey data can be quantitative if answers are numerical, such as commute time, household size, income, or distance traveled.

Yes. Survey data can be qualitative if answers are descriptive, such as explaining why someone migrated or how they feel about neighborhood change.

They collect information directly from people. They help geographers understand behaviors, opinions, perceptions, and needs that may not appear in maps or census data.

A survey samples a portion of the population. A census tries to count or describe the entire population, usually through an official government process.

Treat this microtopic as living vocabulary—reuse these habits whenever stimuli combine maps, tables, interviews, or timelines.


Population change, cultural diffusion, borders, rural systems, urban service gaps, and economic indicators all reward the spatial precision you practice in Unit 1.

Name the place, pull a detail from the stimulus, connect to a course concept, and end with a consequences sentence—skip definition dumps.

Call out who collected the data, at what geography, and when. Note missing groups when quantitative and qualitative pieces disagree.